Ten Best Ways To Sell DeepSeek

Reuters reports: DeepSeek could not be accessed on Wednesday in the Apple or Google app stores in Italy, the day after the country's data protection authority, also known as the Garante, requested information on its use of personal data. This approach allows us to continuously improve our data throughout the lengthy and unpredictable training process. [...] until the model consumes 10T training tokens. [...] 0.3 for the first 10T tokens, and to 0.1 for the remaining 4.8T tokens. [...] in 4.3T tokens, following a cosine decay curve. [...] to 64. We substitute all FFNs except for the first three layers with MoE layers. At the large scale, we train a baseline MoE model comprising 228.7B total parameters on 540B tokens. At the large scale, we train a baseline MoE model comprising 228.7B total parameters on 578B tokens. Each MoE layer consists of 1 shared expert and 256 routed experts, where the intermediate hidden dimension of each expert is 2048. Among the routed experts, 8 experts are activated for each token, and each token is guaranteed to be sent to at most 4 nodes. We leverage pipeline parallelism to deploy different layers of a model on different GPUs, and for each layer, the routed experts are uniformly deployed on 64 GPUs belonging to 8 nodes.
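The routing constraint described above (8 of 256 routed experts per token, spread over at most 4 of 8 nodes) can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the function name `route_token`, the uniform 32-experts-per-node layout, and the rule used to rank nodes (sum of each node's two best scores) are all assumptions made for the example.

```python
import random

NUM_EXPERTS = 256   # routed experts per MoE layer (from the text)
TOP_K = 8           # experts activated per token (from the text)
NUM_NODES = 8       # nodes hosting the routed experts (from the text)
MAX_NODES = 4       # a token may reach at most this many nodes (from the text)
EXPERTS_PER_NODE = NUM_EXPERTS // NUM_NODES  # 32, assuming uniform placement


def route_token(scores):
    """Pick TOP_K experts for one token while touching at most MAX_NODES nodes.

    `scores` holds one affinity score per routed expert. Ranking nodes by the
    sum of their best TOP_K // MAX_NODES scores is an assumption of this sketch.
    """
    per_node = TOP_K // MAX_NODES
    node_rank = []
    for node in range(NUM_NODES):
        start = node * EXPERTS_PER_NODE
        local = sorted(scores[start:start + EXPERTS_PER_NODE], reverse=True)
        node_rank.append((sum(local[:per_node]), node))
    # Keep the MAX_NODES best-scoring nodes.
    node_rank.sort(key=lambda item: item[0], reverse=True)
    allowed = {node for _, node in node_rank[:MAX_NODES]}
    # Ordinary top-k selection, restricted to experts on the allowed nodes.
    candidates = [e for e in range(NUM_EXPERTS) if e // EXPERTS_PER_NODE in allowed]
    candidates.sort(key=lambda e: scores[e], reverse=True)
    return candidates[:TOP_K]


if __name__ == "__main__":
    random.seed(0)
    demo_scores = [random.random() for _ in range(NUM_EXPERTS)]
    picked = route_token(demo_scores)
    print(picked, sorted({e // EXPERTS_PER_NODE for e in picked}))
```

Restricting the candidate set before the top-k step is what bounds cross-node traffic: however the scores fall, a token never needs to be sent to more than four nodes.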

Like DeepSeek-V2, DeepSeek-V3 also employs additional RMSNorm layers after the compressed latent vectors, and multiplies additional scaling factors at the width bottlenecks. The tokenizer for DeepSeek-V3 employs byte-level BPE (Shibata et al., 1999) with an extended vocabulary of 128K tokens. The pretokenizer and training data for our tokenizer are modified to optimize multilingual compression efficiency. Hybrid 8-bit floating point (HFP8) training and inference for deep neural networks. Note that during inference, we directly discard the MTP module, so the inference costs of the compared models are exactly the same. Points 2 and 3 are mainly about financial resources that I don't have available at the moment. To address this problem, researchers from DeepSeek, Sun Yat-sen University, University of Edinburgh, and MBZUAI have developed a novel approach to generate large datasets of synthetic proof data. LLMs have memorized all of them. We tested four of the top Chinese LLMs - Tongyi Qianwen 通义千问, Baichuan 百川大模型, DeepSeek 深度求索, and Yi 零一万物 - to assess their ability to answer open-ended questions about politics, law, and history. As for Chinese benchmarks, except for CMMLU, a Chinese multi-subject multiple-choice task, DeepSeek-V3-Base also shows better performance than Qwen2.5 72B. (3) Compared with LLaMA-3.1 405B Base, the largest open-source model with 11 times the activated parameters, DeepSeek-V3-Base also exhibits much better performance on multilingual, code, and math benchmarks.
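To make the byte-level BPE idea mentioned above concrete, here is a toy sketch of how merge rules can be learned over UTF-8 bytes. It is only an illustration of the general technique: the function name `train_byte_bpe` is hypothetical, there is no pretokenizer, and nothing here reflects the actual DeepSeek-V3 tokenizer or its 128K vocabulary.

```python
from collections import Counter


def train_byte_bpe(text, num_merges):
    """Learn up to `num_merges` merge rules over the UTF-8 bytes of `text`.

    Returns (merges, tokens): the learned byte-pair merges in order, and the
    input re-segmented with those merges applied.
    """
    # Start from single bytes, so any Unicode text is representable.
    tokens = [bytes([b]) for b in text.encode("utf-8")]
    merges = []
    for _ in range(num_merges):
        pairs = Counter(zip(tokens, tokens[1:]))
        if not pairs:
            break
        (left, right), count = pairs.most_common(1)[0]
        if count < 2:
            break  # no pair repeats, so merging would not compress further
        merges.append((left, right))
        merged, i = [], 0
        while i < len(tokens):
            if i + 1 < len(tokens) and tokens[i] == left and tokens[i + 1] == right:
                merged.append(left + right)
                i += 2
            else:
                merged.append(tokens[i])
                i += 1
        tokens = merged
    return merges, tokens


if __name__ == "__main__":
    rules, pieces = train_byte_bpe("深度求索 deep seek deep seek", 10)
    print(len(rules), pieces)
```

Because the base alphabet is the 256 possible byte values, no input is ever out of vocabulary; multilingual compression efficiency then depends on which merges the training data causes the tokenizer to learn.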

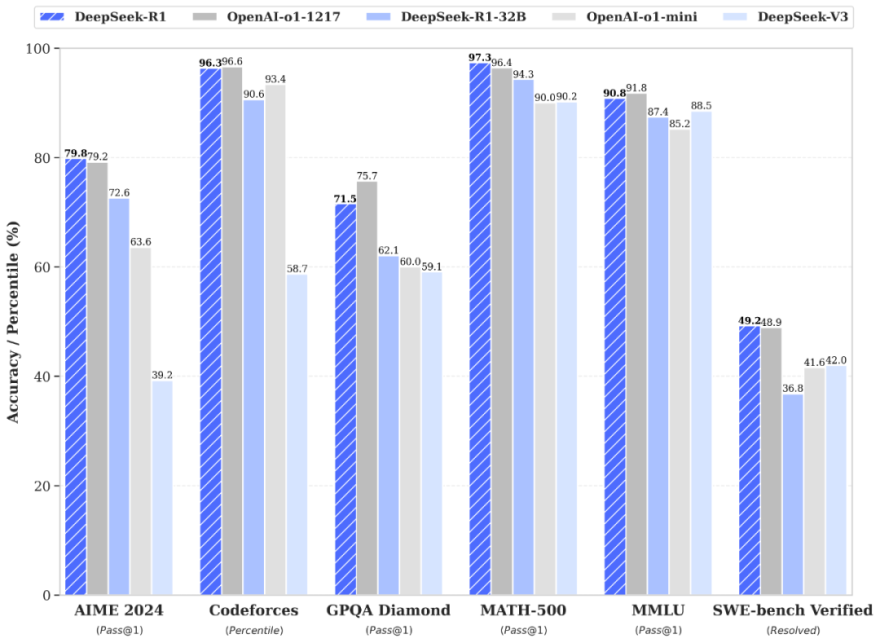

Overall, DeepSeek-V3-Base comprehensively outperforms DeepSeek-V2-Base and Qwen2.5 72B Base, and surpasses LLaMA-3.1 405B Base in the majority of benchmarks, essentially becoming the strongest open-source model. In Table 3, we compare the base model of DeepSeek-V3 with the state-of-the-art open-source base models, including DeepSeek-V2-Base (DeepSeek-AI, 2024c) (our previous release), Qwen2.5 72B Base (Qwen, 2024b), and LLaMA-3.1 405B Base (AI@Meta, 2024b). We evaluate all these models with our internal evaluation framework, and ensure that they share the same evaluation setting. From a more detailed perspective, we compare DeepSeek-V3-Base with the other open-source base models individually. Nvidia began the day as the most valuable publicly traded stock on the market - over $3.4 trillion - after its shares more than doubled in each of the past two years. Higher clock speeds also improve prompt processing, so aim for 3.6GHz or more. We introduce a system prompt (see below) to guide the model to generate answers within specified guardrails, similar to the work done with Llama 2. The prompt: "Always assist with care, respect, and truth.

Following our previous work (DeepSeek-AI, 2024b, c), we adopt perplexity-based evaluation for datasets including HellaSwag, PIQA, WinoGrande, RACE-Middle, RACE-High, MMLU, MMLU-Redux, MMLU-Pro, MMMLU, ARC-Easy, ARC-Challenge, C-Eval, CMMLU, C3, and CCPM, and adopt generation-based evaluation for TriviaQA, NaturalQuestions, DROP, MATH, GSM8K, MGSM, HumanEval, MBPP, LiveCodeBench-Base, CRUXEval, BBH, AGIEval, CLUEWSC, CMRC, and CMath. And if by 2025/2026, Huawei hasn’t gotten its act together and there just aren’t a lot of top-of-the-line AI accelerators for you to play with if you work at Baidu or Tencent, then there’s a relative trade-off. So yeah, there’s a lot coming up there. Why this matters - a lot of the world is simpler than you think: Some parts of science are hard, like taking a bunch of disparate ideas and coming up with an intuition for a way to fuse them to learn something new about the world. A simple strategy is to use block-wise quantization per 128x128 elements, the same way we quantize the model weights. (1) Compared with DeepSeek-V2-Base, due to the improvements in our model architecture, the scale-up of the model size and training tokens, and the enhancement of data quality, DeepSeek-V3-Base achieves significantly better performance as expected. On top of them, keeping the training data and the other architectures the same, we append a 1-depth MTP module onto them and train two models with the MTP strategy for comparison.
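The block-wise quantization per 128x128 elements mentioned above can be illustrated with a small pure-Python sketch. The text does not name the target number format at this point, so the int8-style range (`QMAX = 127`) is an assumption standing in for whatever low-precision format is actually used, and the function names are hypothetical.

```python
BLOCK = 128  # block edge length, from the text (128x128 elements per block)
QMAX = 127   # assumed int8-style range; the text does not name the format


def quantize_blockwise(matrix, block=BLOCK, qmax=QMAX):
    """Quantize a 2-D list of floats with one scale per block x block tile.

    Returns (quantized, scales), where `scales` maps (block_row, block_col)
    to the scale used for that tile.
    """
    rows, cols = len(matrix), len(matrix[0])
    quantized = [[0] * cols for _ in range(rows)]
    scales = {}
    for r0 in range(0, rows, block):
        for c0 in range(0, cols, block):
            r1, c1 = min(r0 + block, rows), min(c0 + block, cols)
            # The largest magnitude in the tile sets that tile's scale.
            amax = max(abs(matrix[r][c]) for r in range(r0, r1) for c in range(c0, c1))
            scale = amax / qmax if amax > 0 else 1.0
            scales[(r0 // block, c0 // block)] = scale
            for r in range(r0, r1):
                for c in range(c0, c1):
                    quantized[r][c] = round(matrix[r][c] / scale)
    return quantized, scales


def dequantize_blockwise(quantized, scales, block=BLOCK):
    """Map quantized integers back to floats using the per-tile scales."""
    return [
        [q * scales[(r // block, c // block)] for c, q in enumerate(row)]
        for r, row in enumerate(quantized)
    ]
```

Keeping one scale per tile means an outlier only coarsens the grid of its own 128x128 block; values in other blocks keep the finer resolution of their own scale.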
